Algorithms are everywhere. They sort and separate the winners from the losers. The winners get the job or a good credit card offer. The losers don't even get an interview, or they pay more for insurance. We're being scored with secret formulas that we don't understand and that often have no system of appeal. That raises the question: What if the algorithms are wrong?

To build an algorithm you need two things: data, meaning what happened in the past, and a definition of success, the thing you're looking for and often hoping for. You train an algorithm by having it look through that data and figure out what is associated with success. What situation leads to success?
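
To make the two ingredients concrete, here is a minimal Python sketch, assuming the simplest possible kind of "training": counting how often each past attribute value went along with success. The names `train` and `score` and the toy records are hypothetical illustrations, not any particular product's method; real systems use far more elaborate statistical models, but they take the same two inputs.

```python
from collections import defaultdict


def train(records, is_success, features):
    """For every (feature, value) pair seen in the past records, learn the
    share of those records that met the chosen definition of success."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for record in records:
        succeeded = is_success(record)
        for feature in features:
            key = (feature, record[feature])
            totals[key] += 1
            hits[key] += int(succeeded)
    return {key: hits[key] / totals[key] for key in totals}


def score(model, record, features):
    """Average the learned success rates of a new record's feature values.
    Values never seen in the past data contribute 0."""
    rates = [model.get((feature, record[feature]), 0.0) for feature in features]
    return sum(rates) / len(rates)


# Hypothetical past records plus a definition of success.
past = [
    {"shift": "day", "team": "A", "promoted": True},
    {"shift": "day", "team": "B", "promoted": True},
    {"shift": "night", "team": "A", "promoted": False},
]
model = train(past, lambda r: r["promoted"], ["shift", "team"])
print(score(model, {"shift": "day", "team": "A"}, ["shift", "team"]))  # 0.75
```

The data is the `records` argument and the definition of success is the `is_success` argument; change either one and the learned associations change with it.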

Actually, everyone uses algorithms. They just don't formalize them in written code. Let me give you an example. I use an algorithm every day to make a meal for my family. The data I use is the ingredients in my kitchen, the time I have, the ambition I have, and I curate that data. I don't count those little packages of ramen noodles as food.

(Laughter)

My definition of success is: a meal is successful if my kids eat vegetables. It would be very different if my youngest son were in charge. He'd say success is if he gets to eat lots of Nutella. But I get to choose success. I am in charge. My opinion matters. That's the first rule of algorithms.
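
The meal example translates almost directly into code. In this hypothetical sketch (the pantry, its numbers, and every function name are invented for illustration), curating the data and choosing the definition of success are ordinary lines in the program, and swapping one success function for another changes the answer:

```python
# Hypothetical pantry; the numbers are made up for illustration.
PANTRY = [
    {"name": "ramen packet", "vegetables": 0, "nutella": 0, "minutes": 5},
    {"name": "vegetable stir-fry", "vegetables": 3, "nutella": 0, "minutes": 25},
    {"name": "nutella crepes", "vegetables": 0, "nutella": 3, "minutes": 20},
]


def curate(options, excluded):
    """The cook's first opinion: some items don't count as food."""
    return [o for o in options if o["name"] not in excluded]


def choose_meal(options, minutes_available, success):
    """Pick the feasible meal that scores highest under `success`.
    Raises ValueError if nothing fits in the time available."""
    feasible = [o for o in options if o["minutes"] <= minutes_available]
    return max(feasible, key=success)["name"]


def parent_success(meal):
    return meal["vegetables"]


def son_success(meal):
    return meal["nutella"]


food = curate(PANTRY, excluded={"ramen packet"})
print(choose_meal(food, 30, parent_success))  # vegetable stir-fry
print(choose_meal(food, 30, son_success))     # nutella crepes
```

Nothing in the selection logic changes between the two calls; only the opinion passed in as `success` does.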

Algorithms are opinions embedded in code. That's really different from what most people think of algorithms. They think algorithms are objective and true and scientific. That's a marketing trick. It's also a marketing trick to intimidate you with algorithms, to make you trust and fear algorithms because you trust and fear mathematics. A lot can go wrong when we put blind faith in big data.

This is Kiri Soares. She's a high school principal in Brooklyn. In 2011, she told me her teachers were being scored with a complex, secret algorithm called the "value-added model." I told her, "Well, figure out what the formula is, show it to me. I'm going to explain it to you." She said, "Well, I tried to get the formula, but my Department of Education contact told me it was math and I wouldn't understand it."

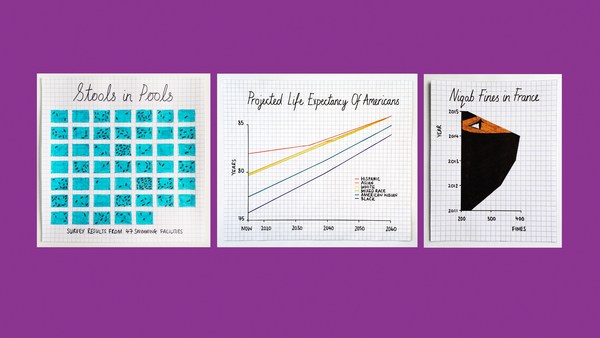

It gets worse. The New York Post filed a Freedom of Information Act request, got all the teachers' names and all their scores, and published them as an act of teacher-shaming. When I tried to get the formulas, the source code, through the same means, I was told I couldn't. I was denied. I later found out that nobody in New York City had access to that formula. No one understood it. Then someone really smart got involved: Gary Rubinstein. He found 665 teachers in that New York Post data who actually had two scores. That could happen if they were teaching both seventh grade math and eighth grade math. He decided to plot the two scores against each other. Each dot represents a teacher.

(Laughter)

What is that?

(Laughter)

That should never have been used for individual assessment. It's almost a random number generator.

(Applause)

But it was. This is Sarah Wysocki. She got fired, along with 205 other teachers, from the Washington, DC school district, even though she had great recommendations from her principal and the parents of her kids.

I know what a lot of you guys are thinking, especially the data scientists, the AI experts here. You're thinking, "Well, I would never make an algorithm that inconsistent." But algorithms can go wrong, and can even have deeply destructive effects, despite good intentions. And whereas an airplane that's designed badly crashes to the earth and everyone sees it, an algorithm designed badly can go on for a long time, silently wreaking havoc.

This is Roger Ailes.

(Laughter)

He founded Fox News in 1996. More than 20 women complained about sexual harassment. They said they weren't allowed to succeed at Fox News. He was ousted last year, but we've seen recently that the problems have persisted. That raises the question: What should Fox News do to turn over a new leaf?

Well, what if they replaced their hiring process with a machine-learning algorithm? That sounds good, right? Think about it. The data, what would the data be? A reasonable choice would be the last 21 years of applications to Fox News. Reasonable. What about the definition of success? A reasonable choice would be, well, who is successful at Fox News? I guess someone who, say, stayed there for four years and was promoted at least once. Sounds reasonable. And then the algorithm would be trained. It would be trained to look at people to learn what led to success, what kind of applications historically led to success by that definition. Now think about what would happen if we applied that to a current pool of applicants. It would filter out women because they do not look like people who were successful in the past.
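
A toy version of this thought experiment, assuming a deliberately crude model that learns historical success rates per group (the counts in `HISTORY`, the threshold, and the function names are all hypothetical), shows how the filtering happens without anyone writing an explicit rule against women; the bias comes entirely from the historical labels:

```python
from collections import Counter

# Hypothetical past hires: (gender, met the success definition?), where
# success = "stayed four years and was promoted at least once".
# Toy counts, skewed to mimic a workplace where women weren't allowed to succeed.
HISTORY = (
    [("man", True)] * 40 + [("man", False)] * 60
    + [("woman", True)] * 5 + [("woman", False)] * 95
)


def train(history):
    """Learn the historical success rate for each gender."""
    totals = Counter(gender for gender, _ in history)
    successes = Counter(gender for gender, succeeded in history if succeeded)
    return {g: successes[g] / totals[g] for g in totals}


def screen(applicants, model, threshold):
    """Keep only applicants who 'look like' past successes."""
    return [a["name"] for a in applicants if model[a["gender"]] >= threshold]


model = train(HISTORY)
pool = [
    {"name": "applicant 1", "gender": "woman"},
    {"name": "applicant 2", "gender": "man"},
    {"name": "applicant 3", "gender": "woman"},
]
print(screen(pool, model, threshold=0.2))  # ['applicant 2']
```

Gender is used directly here only to keep the example short. Dropping that column would not necessarily help, because other features in an application can act as proxies for it.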

Algorithms don't make things fair if you just blithely, blindly apply them. They repeat our past practices, our patterns. They automate the status quo. That would be great if we had a perfect world, but we don't. And I'll add that most companies don't have embarrassing lawsuits, but the data scientists in those companies are told to follow the data, to focus on accuracy. Think about what that means. Because we all have bias, it means they could be codifying sexism or any other kind of bigotry.

Thought experiment, because I like them: an entirely segregated society -- racially segregated, all towns, all neighborhoods -- where we send the police only to the minority neighborhoods to look for crime. The arrest data would be very biased. What if, on top of that, we found data scientists and paid them to predict where the next crime would occur? Minority neighborhood. Or to predict who the next criminal would be? A minority. The data scientists would brag about how great and how accurate their model would be, and they'd be right.
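
The thought experiment can be simulated in a few lines. This hypothetical sketch gives two neighborhoods identical underlying crime, sends police to only one of them, and assumes a made-up `catch_rate` for how much crime turns into arrests; scoring the "prediction" against the resulting arrest data yields perfect accuracy:

```python
# Thought experiment: equal underlying crime, unequal policing (made-up numbers).
TRUE_CRIMES = {"majority neighborhood": 100, "minority neighborhood": 100}
PATROLLED = {"minority neighborhood"}  # police are sent only here


def recorded_arrests(true_crimes, patrolled, catch_rate=0.5):
    """Crime only turns into arrest data where police are present to see it."""
    return {
        area: int(count * catch_rate) if area in patrolled else 0
        for area, count in true_crimes.items()
    }


def predict_hotspot(arrests):
    """'Predict' the next crime where the most arrests were recorded."""
    return max(arrests, key=arrests.get)


def accuracy_against_arrests(prediction, arrests):
    """Share of recorded arrests that fall in the predicted area."""
    return arrests[prediction] / sum(arrests.values())


arrests = recorded_arrests(TRUE_CRIMES, PATROLLED)
hotspot = predict_hotspot(arrests)
print(hotspot, accuracy_against_arrests(hotspot, arrests))
# minority neighborhood 1.0
```

The model is accurate only with respect to labels that the patrol decision itself produced; it says nothing about where crime actually happens.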

Now, reality isn't that drastic, but we do have severe segregation in many cities and towns, and we have plenty of evidence of biased policing and justice system data. And we actually do predict hotspots, places where crimes will occur. And we do, in fact, predict individual criminality, the criminality of individuals. The news organization ProPublica recently looked into one of those "recidivism risk" algorithms, as they're called, being used in Florida by judges during sentencing. Bernard, on the left, the black man, was scored a 10 out of 10. Dylan, on the right, 3 out of 10. 10 out of 10, high risk. 3 out of 10, low risk. They were both brought in for drug possession. They both had records, but Dylan had a felony and Bernard didn't. This matters, because the higher your score, the more likely you are to be given a longer sentence.

What's going on? Data laundering. It's a process by which technologists hide ugly truths inside black box algorithms and call them objective; call them meritocratic. When they're secret, important and destructive, I've coined a term for these algorithms: "weapons of math destruction."

(Laughter)

(Applause)

They're everywhere, and it's not a mistake. These are private companies building private algorithms for private ends. Even the ones I talked about for teachers and the public police, those were built by private companies and sold to the government institutions. They call it their "secret sauce" -- that's why they can't tell us about it. It's also private power. They are profiting from wielding the authority of the inscrutable. Now you might think, since all this stuff is private and there's competition, maybe the free market will solve this problem. It won't. There's a lot of money to be made in unfairness.

Also, we're not rational economic agents. We all are biased. We're all racist and bigoted in ways that we wish we weren't, in ways that we don't even know. We know this, though, in aggregate, because sociologists have consistently demonstrated it with experiments in which they send out a bunch of job applications, equally qualified, but some with white-sounding names and some with black-sounding names, and the results are always disappointing -- always.

So we are the ones that are biased, and we are injecting those biases into the algorithms by choosing what data to collect, like I chose not to think about ramen noodles -- I decided it was irrelevant. But by trusting the data that's actually picking up on past practices and by choosing the definition of success, how can we expect the algorithms to emerge unscathed? We can't. We have to check them. We have to check them for fairness.

The good news is, we can check them for fairness. Algorithms can be interrogated, and they will tell us the truth every time. And we can fix them. We can make them better. I call this an algorithmic audit, and I'll walk you through it.

First, data integrity check. For the recidivism risk algorithm I talked about, a data integrity check would mean we'd have to come to terms with the fact that in the US, whites and blacks smoke pot at the same rate but blacks are far more likely to be arrested -- four or five times more likely, depending on the area. What is that bias looking like in other crime categories, and how do we account for it?
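
One simple piece of such a check is to compare arrest rates with usage rates. The sketch below is illustrative only: the function name `arrest_rate_ratio` is hypothetical and the counts are invented to reproduce the "four or five times" gap mentioned above, not real statistics.

```python
def arrest_rate_ratio(users, arrests, group, reference):
    """Arrests per user in `group` divided by arrests per user in `reference`.
    If both groups use the drug at the same rate, a ratio well above 1 is a
    sign of biased enforcement rather than different behavior."""
    rate = arrests[group] / users[group]
    reference_rate = arrests[reference] / users[reference]
    return rate / reference_rate


# Hypothetical counts, chosen only to illustrate a fourfold gap.
users = {"black": 10_000, "white": 10_000}
arrests = {"black": 400, "white": 100}
print(arrest_rate_ratio(users, arrests, "black", "white"))  # 4.0
```

A model trained on arrest labels like these would learn the enforcement gap as if it were a behavioral difference, which is why the check belongs before training rather than after.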

Second, we should think about the definition of success and audit that. Remember the hiring algorithm we talked about? Someone who stays for four years and is promoted once? Well, that is a successful employee, but it's also an employee who is supported by their culture. That said, it can also be quite biased. We need to separate those two things. We should look to the blind orchestra audition as an example. That's where the people auditioning are behind a sheet. What I want you to notice there is that the people who are listening have decided what's important and what's not important, and they're not getting distracted by what's not. When blind orchestra auditions started, the number of women in orchestras went up by a factor of five.
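
Auditing a success definition can start by asking how often each group even meets it. This hypothetical sketch compares per-group success rates and reports the ratio of the lowest rate to the highest; the 0.8 cutoff mentioned in the comment is the "four-fifths" rule of thumb from US employment-selection guidelines, used here only as an example threshold. The `blind` helper mirrors the audition screen by keeping only the fields evaluators decided in advance were relevant. The records, field names, and functions are made up.

```python
def success_rate_by_group(records, is_success, group_key):
    """How often each group meets the chosen definition of success."""
    totals, hits = {}, {}
    for r in records:
        g = r[group_key]
        totals[g] = totals.get(g, 0) + 1
        hits[g] = hits.get(g, 0) + int(is_success(r))
    return {g: hits[g] / totals[g] for g in totals}


def impact_ratio(rates):
    """Lowest group rate divided by the highest. Below roughly 0.8
    (the 'four-fifths' rule of thumb), the definition deserves scrutiny."""
    return min(rates.values()) / max(rates.values())


def blind(candidate, relevant_fields):
    """Like the screen in a blind audition: evaluators only see the fields
    they decided in advance were relevant."""
    return {k: v for k, v in candidate.items() if k in relevant_fields}


def stayed_and_promoted(r):
    """The hiring example's success label: four years and one promotion."""
    return r["years"] >= 4 and r["promotions"] >= 1


# Hypothetical employee records.
staff = [
    {"gender": "man", "years": 5, "promotions": 1},
    {"gender": "man", "years": 4, "promotions": 2},
    {"gender": "woman", "years": 2, "promotions": 0},
    {"gender": "woman", "years": 6, "promotions": 1},
]
rates = success_rate_by_group(staff, stayed_and_promoted, "gender")
print(rates, impact_ratio(rates))  # {'man': 1.0, 'woman': 0.5} 0.5
print(blind(staff[2], {"years", "promotions"}))
```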

Next, we have to consider accuracy. This is where the value-added model for teachers would fail immediately. No algorithm is perfect, of course, so we have to consider the errors of every algorithm. How often are there errors, and for whom does this model fail? What is the cost of that failure?
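
"For whom does this model fail?" can be answered by computing error rates separately for each group instead of reporting one overall accuracy figure. A minimal sketch, assuming each row records a group, the model's high-risk call, and whether the person actually reoffended (the function name and the toy rows are hypothetical):

```python
def error_rates_by_group(rows):
    """rows: (group, predicted_high_risk, actually_reoffended) triples.
    Returns each group's false positive rate (flagged high risk but did not
    reoffend) and false negative rate (flagged low risk but did reoffend)."""
    counts = {}
    for group, predicted, actual in rows:
        c = counts.setdefault(group, {"fp": 0, "neg": 0, "fn": 0, "pos": 0})
        if actual:
            c["pos"] += 1
            c["fn"] += int(not predicted)
        else:
            c["neg"] += 1
            c["fp"] += int(predicted)
    return {
        g: {
            "false_positive_rate": c["fp"] / c["neg"] if c["neg"] else None,
            "false_negative_rate": c["fn"] / c["pos"] if c["pos"] else None,
        }
        for g, c in counts.items()
    }


# Hypothetical toy outcomes for two groups.
rows = (
    [("A", True, False)] * 2 + [("A", False, False)] * 2 + [("A", True, True)] * 2
    + [("B", False, False)] * 4 + [("B", False, True)] + [("B", True, True)]
)
for group, rates in error_rates_by_group(rows).items():
    print(group, rates)
```

In the toy data, group A bears the false positives (people wrongly labeled high risk) while group B bears the false negatives, which is exactly the kind of difference a single accuracy number hides.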

And finally, we have to consider the long-term effects of algorithms, the feedback loops they engender. That sounds abstract, but imagine if Facebook engineers had considered that before they decided to show us only things that our friends had posted.
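
A feedback loop is easy to see in a toy simulation. In this hypothetical sketch (the function and source names are invented, and it is not how any real news feed ranks posts), the feed shows only the source with the most past clicks, and only what is shown can be clicked, so a small early lead locks itself in:

```python
def simulate_feed(initial_clicks, rounds, show_top=1):
    """Each round, show only the `show_top` sources with the most past clicks.
    Only shown sources can be clicked, so each shown source gains a click."""
    clicks = dict(initial_clicks)
    for _ in range(rounds):
        shown = sorted(clicks, key=clicks.get, reverse=True)[:show_top]
        for source in shown:
            clicks[source] += 1
    return clicks


print(simulate_feed({"friend posts": 3, "news": 2}, rounds=10))
# {'friend posts': 13, 'news': 2}
```

The "news" source never gets another chance to be clicked, so the data the system collects can only confirm its original choice.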

I have two more messages, one for the data scientists out there. Data scientists: we should not be the arbiters of truth. We should be translators of ethical discussions that happen in larger society.

(Applause)

And the rest of you, the non-data scientists: this is not a math test. This is a political fight. We need to demand accountability for our algorithmic overlords.

(Applause)

The era of blind faith in big data must end.

Thank you very much.

(Applause)
