Up until now, our communication with machines has always been limited to conscious and direct forms. Whether it's something simple like turning on the lights with a switch, or something as complex as programming robotics, we have always had to give a machine a command, or even a series of commands, in order for it to do something for us. Communication between people, on the other hand, is far more complex and a lot more interesting, because we take into account so much more than what is explicitly expressed. We observe facial expressions and body language, and we can intuit feelings and emotions from our dialogue with one another. This actually forms a large part of our decision-making process. Our vision is to introduce this whole new realm of human interaction into human-computer interaction, so that computers can understand not only what you direct them to do, but can also respond to your facial expressions and emotional experiences. And what better way to do this than by interpreting the signals naturally produced by our brain, our center for control and experience?

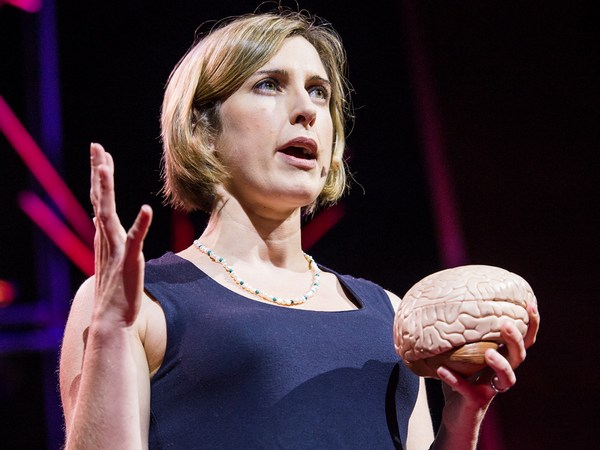

Well, it sounds like a pretty good idea, but this task, as Bruno mentioned, isn't an easy one, for two main reasons. First, the detection algorithms. Our brain is made up of billions of active neurons, with around 170,000 km of combined axon length. When these neurons interact, the chemical reaction emits an electrical impulse, which can be measured. The majority of our functional brain is distributed over the outer surface layer of the brain, and to increase the area that's available for mental capacity, the brain surface is highly folded. Now, this cortical folding presents a significant challenge for interpreting surface electrical impulses. Each individual's cortex is folded differently, very much like a fingerprint. So even though a signal may come from the same functional part of the brain, by the time the structure has been folded, its physical location is very different between individuals, even identical twins. There is no longer any consistency in the surface signals.

Our breakthrough was to create an algorithm that unfolds the cortex, so that we can map the signals closer to their source, and therefore make it capable of working across a mass population. The second challenge is the actual device for observing brainwaves. EEG measurements typically involve a hairnet with an array of sensors, like the one that you can see here in the photo. A technician will put the electrodes onto the scalp using a conductive gel or paste, usually after preparing the scalp by light abrasion. Now, this is quite time-consuming and isn't the most comfortable process. And on top of that, these systems actually cost tens of thousands of dollars.

So with that, I'd like to invite onstage Evan Grant, one of last year's speakers, who has kindly agreed to help me demonstrate what we've been able to develop.

(Applause)

So the device that you see is a 14-channel, high-fidelity EEG acquisition system. It doesn't require any scalp preparation: no conductive gel or paste. It only takes a few minutes to put on and for the signals to settle. It's also wireless, so it gives you the freedom to move around. And compared to the tens of thousands of dollars for a traditional EEG system, this headset only costs a few hundred dollars. Now on to the detection algorithms. The facial expression and emotional experience detections I mentioned before are actually designed to work out of the box, with some sensitivity adjustments available for personalization. But with the limited time we have available, I'd like to show you the cognitive suite, which basically gives you the ability to move virtual objects with your mind.
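
The talk says the facial-expression and emotion detections work out of the box, with sensitivity adjustments for personalization, but it does not say how those adjustments work. A minimal sketch, assuming each detection produces a confidence score between 0 and 1 and that the sensitivity setting simply moves a decision threshold; the function name `detect` and the linear threshold are hypothetical.

```python
def detect(score: float, sensitivity: float = 0.5) -> bool:
    """Hypothetical detector with a per-user sensitivity knob.

    score: detector confidence, assumed to lie in [0, 1].
    sensitivity: 0.0 (least sensitive) to 1.0 (most sensitive).
    Raising the sensitivity lowers the threshold the score must reach.
    """
    if not 0.0 <= sensitivity <= 1.0:
        raise ValueError("sensitivity must be between 0 and 1")
    threshold = 1.0 - sensitivity
    return score >= threshold
```

For example, a faint smile scoring 0.4 is ignored at the default setting, but is reported once the user raises the sensitivity to 0.8.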

Now, Evan is new to this system, so what we have to do first is create a new profile for him. He's obviously not Joanne, so we'll "add user." Evan. Okay. So the first thing we need to do with the cognitive suite is train a neutral signal. With neutral, there's nothing in particular that Evan needs to do. He just hangs out. He's relaxed. And the idea is to establish a baseline, or normal state, for his brain, because every brain is different. It takes eight seconds to do this, and now that that's done, we can choose a movement-based action. So Evan, choose something that you can visualize clearly in your mind.
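
Establishing a neutral baseline can be illustrated as recording per-channel statistics while the user relaxes, then expressing later readings relative to them. This is a sketch under stated assumptions, not the product's actual method: each sample is assumed to be a list of channel values (14 for the headset described above), and z-score normalization is a common technique swapped in for illustration.

```python
import math
from typing import List, Sequence, Tuple


def fit_baseline(samples: Sequence[Sequence[float]]) -> List[Tuple[float, float]]:
    """Return per-channel (mean, std) computed from a relaxed, neutral recording.

    Assumption: `samples` is a list of samples, each holding one value per
    channel (e.g. 14 values for a 14-channel headset).
    """
    n = len(samples)
    stats = []
    for ch in range(len(samples[0])):
        values = [s[ch] for s in samples]
        mean = sum(values) / n
        var = sum((v - mean) ** 2 for v in values) / n
        std = math.sqrt(var) or 1.0  # a flat channel would otherwise divide by zero
        stats.append((mean, std))
    return stats


def normalize(sample: Sequence[float], stats: Sequence[Tuple[float, float]]) -> List[float]:
    """Express a new sample relative to this user's own neutral state (z-scores)."""
    return [(x - m) / s for x, (m, s) in zip(sample, stats)]
```

Because each user gets their own statistics, the same raw reading can mean "unusual" for one brain and "normal" for another, which is the point of training neutral first.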

Evan Grant: Let's do "pull."

Tan Le: Okay, so let's choose "pull." So the idea here now is that Evan needs to imagine the object coming forward into the screen, and there's a progress bar that will scroll across the screen while he's doing that. The first time, nothing will happen, because the system has no idea how he thinks about "pull." But maintain that thought for the entire duration of the eight seconds. So: one, two, three, go. Okay. So once we accept this, the cube is live. So let's see if Evan can actually try and imagine pulling. Ah, good job! (Applause) That's really amazing.
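
The talk does not say which machine-learning algorithm turns a single eight-second recording into a live "pull" detection. One simple technique that can learn from just one example is nearest-centroid template matching, sketched below as an illustration only; the feature vectors (for instance, baseline-normalized channel values) and the function names are assumptions.

```python
import math
from typing import Dict, List, Sequence


def centroid(window: Sequence[Sequence[float]]) -> List[float]:
    """Average one training window (a list of feature vectors) into a single template."""
    n = len(window)
    return [sum(col) / n for col in zip(*window)]


def classify(features: Sequence[float], templates: Dict[str, List[float]]) -> str:
    """Return the label whose template is closest in Euclidean distance."""
    return min(templates, key=lambda label: math.dist(features, templates[label]))
```

With a "neutral" template and a "pull" template each built from one recording, every new feature vector is labeled by whichever template it is nearer to; the cube would then move only while "pull" wins.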

(Applause)

We have a little bit of time left, so I'm going to ask Evan to do a really difficult task. And this one is difficult because it's all about being able to visualize something that doesn't exist in our physical world. This is "disappear." With movement-based actions, we do them all the time, so you can visualize them. But with "disappear," there really aren't any analogies. So Evan, what you want to do here is to imagine the cube slowly fading out, okay? Same sort of drill. So: one, two, three, go. Okay. Let's try that. Oh, my goodness. He's just too good. Let's try that again.

EG: Losing concentration.

(Laughter)

TL: But we can see that it actually works, even though you can only hold it for a little bit of time. As I said, it's a very difficult process to imagine this. And the great thing about it is that we've only given the software one instance of how he thinks about "disappear." As there is a machine learning algorithm in this --

(Applause)

Thank you. Good job. Good job.

(Applause)

Thank you, Evan, you're a wonderful, wonderful example of the technology.

So, as you saw before, there is a leveling system built into this software, so that as Evan, or any user, becomes more familiar with the system, they can continue to add more and more detections, and the system begins to differentiate between distinct thoughts. And once you've trained up the detections, these thoughts can be assigned or mapped to any computing platform, application or device.
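
The leveling idea (adding trained detections one at a time, then binding each one to an action on some platform) can be sketched as a small registry. Everything here is hypothetical: the `DetectionSet` class, the action strings, and the use of nearest-template matching to decide which detection fired.

```python
import math
from typing import Callable, Dict, List, Optional, Sequence


class DetectionSet:
    """Hypothetical registry of trained thought templates and the actions they trigger."""

    def __init__(self, neutral: Sequence[float]):
        # The neutral template is always present but deliberately bound to nothing.
        self.templates: Dict[str, List[float]] = {"neutral": list(neutral)}
        self.bindings: Dict[str, Callable[[], str]] = {}

    def add_detection(self, label: str, template: Sequence[float],
                      action: Callable[[], str]) -> None:
        """Add one more trained detection and map it to an action."""
        self.templates[label] = list(template)
        self.bindings[label] = action

    def dispatch(self, features: Sequence[float]) -> Optional[str]:
        """Pick the nearest template and run its bound action, if any."""
        label = min(self.templates,
                    key=lambda k: math.dist(features, self.templates[k]))
        action = self.bindings.get(label)
        return action() if action else None
```

Each new detection only adds a template and a binding, so the same trained thoughts could be rebound to a game, a smart-home controller or a robot without retraining.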

So I'd like to show you a few examples, because there are many possible applications for this new interface. In games and virtual worlds, for example, your facial expressions can naturally and intuitively be used to control an avatar or virtual character. Obviously, you can experience the fantasy of magic and control the world with your mind. And also, colors, lighting, sound and effects can dynamically respond to your emotional state to heighten the experience that you're having, in real time. And moving on to some applications built by developers and researchers around the world: with robots and simple machines, for example, in this case flying a toy helicopter simply by thinking "lift" with your mind.

The technology can also be applied to real-world applications, in this example, a smart home. You know, from the user interface of the control system, to opening or closing curtains. And of course, also to the lighting, turning it on or off. And finally, to real life-changing applications, such as being able to control an electric wheelchair. In this example, facial expressions are mapped to the movement commands.

Man: Now blink right to go right. Now blink left to turn back left. Now smile to go straight.
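
The wheelchair mapping narrated in the demo (blink right to go right, blink left to turn left, smile to go straight) is essentially a lookup table from detected expressions to movement commands. A minimal sketch; the expression labels are hypothetical, and stopping on any unmapped input is an assumed fail-safe that the talk does not describe.

```python
from enum import Enum
from typing import Optional


class Command(Enum):
    RIGHT = "turn right"
    LEFT = "turn left"
    FORWARD = "go straight"
    STOP = "stop"


# Mapping as narrated in the demo; the expression labels are hypothetical.
EXPRESSION_TO_COMMAND = {
    "blink_right": Command.RIGHT,
    "blink_left": Command.LEFT,
    "smile": Command.FORWARD,
}


def to_command(expression: Optional[str]) -> Command:
    """Translate a detected facial expression into a wheelchair command.

    Assumption: anything unmapped, or no expression at all, stops the chair.
    """
    return EXPRESSION_TO_COMMAND.get(expression, Command.STOP)
```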

TL: We really -- Thank you.

(Applause)

We are really only scratching the surface of what is possible today, and with the community's input, and also with the involvement of developers and researchers from around the world, we hope that you can help us to shape where the technology goes from here. Thank you so much.
