Today I'm going to talk about technology and society. The Department of Transportation estimated that last year 35,000 people died from traffic crashes in the US alone. Worldwide, 1.2 million people die every year in traffic accidents. If there were a way we could eliminate 90 percent of those accidents, would you support it? Of course you would. This is what driverless car technology promises to achieve by eliminating the main source of accidents -- human error.

Now picture yourself in a driverless car in the year 2030, sitting back and watching this vintage TEDxCambridge video.

(Laughter)

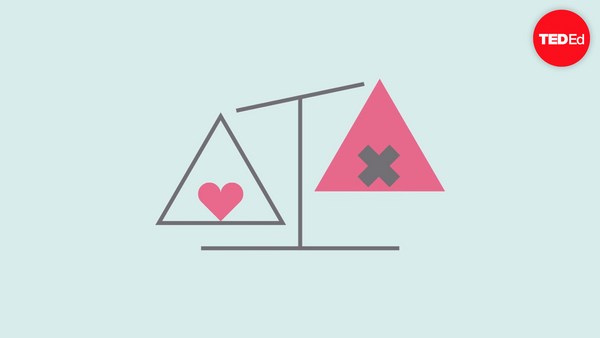

All of a sudden, the car experiences mechanical failure and is unable to stop. If the car continues, it will crash into a bunch of pedestrians crossing the street, but the car may swerve instead, hitting and killing one bystander to save the pedestrians. What should the car do, and who should decide? What if, instead, the car could swerve into a wall, crashing and killing you, the passenger, in order to save those pedestrians? This scenario is inspired by the trolley problem, which was invented by philosophers a few decades ago to think about ethics.

Now, the way we think about this problem matters. We may, for example, not think about it at all. We may say this scenario is unrealistic, incredibly unlikely, or just silly. But I think this criticism misses the point because it takes the scenario too literally. Of course no accident is going to look like this; no accident has two or three options where everybody dies somehow. Instead, the car is going to calculate something like the probability of hitting a certain group of people: if it swerves in one direction rather than another, it might slightly increase the risk to passengers or other drivers versus pedestrians. It's going to be a more complex calculation, but it's still going to involve trade-offs, and trade-offs often require ethics.
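
To make the "more complex calculation" concrete, here is a minimal sketch, assuming the car can estimate a probability of hitting each group for each manoeuvre. The class, function names, groups, and all numbers are hypothetical and invented for illustration; this is not how any real vehicle is programmed. Note that even the choice to minimise the plain sum of expected harm is itself an ethical trade-off.

```python
from dataclasses import dataclass


@dataclass
class Option:
    """One manoeuvre the car could take, with assumed risks per group."""
    name: str
    # group name -> (probability each member is hit, number of people in group)
    risks: dict[str, tuple[float, int]]

    def expected_harm(self) -> float:
        # Expected number of people hit: sum of p * n over all groups.
        return sum(p * n for p, n in self.risks.values())


def least_expected_harm(options: list[Option]) -> Option:
    # Minimising the plain sum gives every person's risk equal weight,
    # which is itself an ethical assumption.
    return min(options, key=lambda o: o.expected_harm())


# Invented numbers, for illustration only.
stay = Option("stay", {"pedestrians": (0.30, 5), "passengers": (0.02, 1)})
swerve = Option("swerve", {"pedestrians": (0.05, 5), "passengers": (0.10, 1),
                           "other_drivers": (0.08, 2)})

for option in (stay, swerve):
    print(f"{option.name}: expected harm {option.expected_harm():.2f}")
print("lowest expected harm:", least_expected_harm([stay, swerve]).name)
```

Here swerving lowers the risk to pedestrians but raises it for the passenger and other drivers; the rule only picks a winner because we decided in advance how to weigh those groups.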

We might say then, "Well, let's not worry about this. Let's wait until technology is fully ready and 100 percent safe." Suppose that we can indeed eliminate 90 percent of those accidents, or even 99 percent, in the next 10 years. What if eliminating the last one percent of accidents requires 50 more years of research? Should we not adopt the technology? That's 60 million people dead in car accidents if we maintain the current rate. So the point is, waiting for full safety is also a choice, and it also involves trade-offs.
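
The 60 million figure follows from the worldwide toll quoted at the start, assuming it stays constant over the hypothetical 50 extra years of research:

$$
1.2 \times 10^{6}\ \tfrac{\text{deaths}}{\text{year}} \times 50\ \text{years} = 6 \times 10^{7}\ \text{deaths}
$$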

People online on social media have been coming up with all sorts of ways to not think about this problem. One person suggested the car should just swerve somehow in between the passengers --

(Laughter)

and the bystander. Of course if that's what the car can do, that's what the car should do. We're interested in scenarios in which this is not possible. And my personal favorite was a suggestion by a blogger to have an eject button in the car that you press --

(Laughter)

just before the car self-destructs.

(Laughter)

So if we acknowledge that cars will have to make trade-offs on the road, how do we think about those trade-offs, and how do we decide? Well, maybe we should run a survey to find out what society wants, because ultimately, regulations and the law are a reflection of societal values.

So this is what we did. With my collaborators, Jean-François Bonnefon and Azim Shariff, we ran a survey in which we presented people with these types of scenarios. We gave them two options inspired by two philosophers: Jeremy Bentham and Immanuel Kant. Bentham says the car should follow utilitarian ethics: it should take the action that will minimize total harm -- even if that action will kill a bystander and even if that action will kill the passenger. Immanuel Kant says the car should follow duty-bound principles, like "Thou shalt not kill." So you should not take an action that explicitly harms a human being, and you should let the car take its course even if that's going to harm more people.
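
The two options put to survey respondents can be written as two decision rules. This is a toy sketch under stated assumptions: each outcome is reduced to a death count plus a flag marking whether the car actively redirects harm onto someone, and exactly one option is "stay on course". The names and numbers are hypothetical, not the survey's actual materials.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Outcome:
    action: str
    deaths: int
    intervenes: bool  # True if the car actively redirects harm onto someone


def bentham(outcomes: list[Outcome]) -> Outcome:
    # Utilitarian rule: minimise total deaths, regardless of who dies.
    return min(outcomes, key=lambda o: o.deaths)


def kant(outcomes: list[Outcome]) -> Outcome:
    # Duty-bound rule as paraphrased in the talk: never take an action that
    # explicitly harms someone; let the car take its course instead.
    for o in outcomes:
        if not o.intervenes:
            return o
    raise ValueError("toy model assumes one 'stay on course' option")


options = [
    Outcome("stay on course", deaths=5, intervenes=False),   # hits the pedestrians
    Outcome("swerve into bystander", deaths=1, intervenes=True),
    Outcome("swerve into wall", deaths=1, intervenes=True),  # kills the passenger
]
print("Bentham:", bentham(options).action)
print("Kant:", kant(options).action)
```

The Bentham rule is indifferent between killing the bystander and killing the passenger (here `min` simply returns the first of the tied options), which is exactly the case the purchase question below probes.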

What do you think? Bentham or Kant? Here's what we found. Most people sided with Bentham. So it seems that people want cars to be utilitarian and minimize total harm, and that's what we should all do. Problem solved. But there is a little catch. When we asked people whether they would purchase such cars, they said, "Absolutely not."

(Laughter)

They would like to buy cars that protect them at all costs, but they want everybody else to buy cars that minimize harm.

(Laughter)

We've seen this problem before. It's called a social dilemma. And to understand the social dilemma, we have to go a little bit back in history. In the 1800s, English economist William Forster Lloyd published a pamphlet that describes the following scenario. You have a group of farmers -- English farmers -- who are sharing a common land for their sheep to graze. Now, if each farmer brings a certain number of sheep -- let's say three sheep -- the land will be rejuvenated, the farmers are happy, the sheep are happy, everything is good. Now, if one farmer brings one extra sheep, that farmer will do slightly better, and no one else will be harmed. But if every farmer makes that individually rational decision, the land will be overrun, and it will be depleted to the detriment of all the farmers, and of course, to the detriment of the sheep.
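
Lloyd's scenario can be reproduced with a tiny payoff model. This is a sketch with invented parameters: a hypothetical pasture capacity of 31 sheep shared by 10 farmers, and an assumed linear loss in each sheep's value once the land is overgrazed. It shows the defining feature of a social dilemma: one extra sheep pays off for its owner, but the same choice made by everyone leaves everyone worse off.

```python
FARMERS = 10
CAPACITY = 31   # sheep the pasture can feed without degrading (assumed)
PENALTY = 0.05  # value lost per sheep for every sheep over capacity (assumed)


def value_per_sheep(total_sheep: int) -> float:
    # Full value up to capacity; beyond it, overgrazing erodes every sheep's value.
    excess = max(0, total_sheep - CAPACITY)
    return max(0.0, 1.0 - PENALTY * excess)


def payoffs(herds: list[int]) -> list[float]:
    # Each farmer's payoff is their herd size times the shared per-sheep value.
    v = value_per_sheep(sum(herds))
    return [n * v for n in herds]


baseline = payoffs([3] * FARMERS)                 # everyone grazes three sheep
one_extra = payoffs([4] + [3] * (FARMERS - 1))    # one farmer adds a sheep
all_extra = payoffs([4] * FARMERS)                # every farmer adds a sheep

print("baseline:", baseline[0])
print("one extra: defector", one_extra[0], "others", one_extra[1])
print("all extra:", round(all_extra[0], 2))
```

With these assumed numbers the lone defector gains while the others lose nothing, yet when all ten add a sheep each farmer ends up below the baseline payoff of 3.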

We see this problem in many places: in the difficulty of managing overfishing, or in reducing carbon emissions to mitigate climate change. When it comes to the regulation of driverless cars, the common land now is basically public safety -- that's the common good -- and the farmers are the passengers or the car owners who are choosing to ride in those cars. And by making the individually rational choice of prioritizing their own safety, they may collectively be diminishing the common good, which is minimizing total harm. It's called the tragedy of the commons, traditionally, but I think in the case of driverless cars, the problem may be a little bit more insidious because there is not necessarily an individual human being making those decisions. So car manufacturers may simply program cars that will maximize safety for their clients, and those cars may learn automatically on their own that doing so requires slightly increasing risk for pedestrians. So to use the sheep metaphor, it's like we now have electric sheep that have a mind of their own.

(Laughter)

And they may go and graze even if the farmer doesn't know it.

So this is what we may call the tragedy of the algorithmic commons, and it offers new types of challenges. Traditionally, we solve these types of social dilemmas using regulation: either governments or communities get together, and they decide collectively what kind of outcome they want and what sort of constraints on individual behavior they need to implement. Then, using monitoring and enforcement, they can make sure that the public good is preserved. So why don't we just, as regulators, require that all cars minimize harm? After all, this is what people say they want. And more importantly, I can be sure that as an individual, if I buy a car that may sacrifice me in a very rare case, I'm not the only sucker doing that while everybody else enjoys unconditional protection.

In our survey, we did ask people whether they would support regulation and here's what we found. First of all, people said no to regulation; and second, they said, "Well if you regulate cars to do this and to minimize total harm, I will not buy those cars." So ironically, by regulating cars to minimize harm, we may actually end up with more harm because people may not opt into the safer technology even if it's much safer than human drivers.
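
The irony can be checked with back-of-the-envelope arithmetic. This sketch uses entirely assumed values: a normalised human fatality rate of 1.0 per car, self-protective driverless cars at 0.12, harm-minimising ones slightly safer at 0.10, and hypothetical adoption shares of 60 percent without regulation versus 20 percent with it. None of these numbers come from the survey; they only show how lower adoption can outweigh a safer per-car rule.

```python
HUMAN_RATE = 1.0  # expected deaths per human-driven car, normalised (assumed)


def expected_deaths(av_share: float, av_rate: float, fleet: int = 1_000) -> float:
    # Mixed fleet: the share not buying driverless cars keeps the human rate.
    human_cars = fleet * (1 - av_share)
    av_cars = fleet * av_share
    return human_cars * HUMAN_RATE + av_cars * av_rate


# Unregulated: self-protective cars, slightly less safe for others, but many buyers.
unregulated = expected_deaths(av_share=0.6, av_rate=0.12)
# Regulated: harm-minimising cars, a bit safer per car, but far fewer buyers.
regulated = expected_deaths(av_share=0.2, av_rate=0.10)

print(f"unregulated: {unregulated:.0f}, regulated: {regulated:.0f}")
```

Under these assumptions the regulated scenario produces more expected deaths overall, because most of the fleet stays human-driven.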

I don't have the final answer to this riddle, but I think as a starting point, we need society to come together to decide what trade-offs we are comfortable with and to come up with ways in which we can enforce those trade-offs.

As a starting point, my brilliant students, Edmond Awad and Sohan Dsouza, built the Moral Machine website, which generates random scenarios at you -- basically a bunch of random dilemmas in a sequence where you have to choose what the car should do in a given scenario. And we vary the ages and even the species of the different victims. So far we've collected over five million decisions by over one million people worldwide from the website. And this is helping us form an early picture of what trade-offs people are comfortable with and what matters to them -- even across cultures. But more importantly, doing this exercise is helping people recognize the difficulty of making those choices and that the regulators are tasked with impossible choices. And maybe this will help us as a society understand the kinds of trade-offs that will be implemented ultimately in regulation.
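
The idea of generating random dilemmas and aggregating people's choices can be sketched as follows. This is a hypothetical illustration, not the Moral Machine's actual implementation: the character list, the two-option "stay or swerve" structure, and the function names are all assumptions. It generates groups that vary in size, age, and species, and tallies how often each character type is spared or sacrificed across recorded decisions.

```python
import random
from collections import Counter
from dataclasses import dataclass
from typing import Optional

# Character types are illustrative; the real site's categories may differ.
CHARACTERS = ["man", "woman", "boy", "girl", "elderly man", "elderly woman", "dog", "cat"]


@dataclass
class Dilemma:
    stay_victims: list[str]    # hit if the car continues straight
    swerve_victims: list[str]  # hit if the car swerves


def random_dilemma(rng: random.Random, max_group: int = 5) -> Dilemma:
    # Vary both the size and the make-up (age, species) of each group.
    def group() -> list[str]:
        return rng.choices(CHARACTERS, k=rng.randint(1, max_group))
    return Dilemma(group(), group())


def session(n: int, seed: Optional[int] = None) -> list[Dilemma]:
    # A seeded generator makes a session reproducible.
    rng = random.Random(seed)
    return [random_dilemma(rng) for _ in range(n)]


def tally(decisions: list[tuple[Dilemma, str]]) -> tuple[Counter, Counter]:
    # Count how often each character type is spared or sacrificed across
    # all recorded choices; a choice is "stay" or "swerve".
    spared, sacrificed = Counter(), Counter()
    for dilemma, choice in decisions:
        if choice == "stay":
            hit, saved = dilemma.stay_victims, dilemma.swerve_victims
        else:
            hit, saved = dilemma.swerve_victims, dilemma.stay_victims
        sacrificed.update(hit)
        spared.update(saved)
    return spared, sacrificed


for d in session(3, seed=42):
    print(d)
```

Aggregating such tallies over many users is one simple way to approach "what matters to them"; a per-user version of the same tally would give the kind of personal summary described next.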

And indeed, I was very happy to hear that the first set of regulations that came from the Department of Transportation -- announced last week -- included a 15-point checklist for all carmakers to provide, and number 14 was ethical consideration -- how are you going to deal with that. We also have people reflect on their own decisions by giving them summaries of what they chose. I'll give you one example -- I'm just going to warn you that this is not your typical example, your typical user. This is the most sacrificed and the most saved character for this person.

(Laughter)

Some of you may agree with him, or her, we don't know. But this person also seems to slightly prefer passengers over pedestrians in their choices and is very happy to punish jaywalking.

(Laughter)

So let's wrap up. We started with the question -- let's call it the ethical dilemma -- of what the car should do in a specific scenario: swerve or stay? But then we realized that the problem was a different one. It was the problem of how to get society to agree on and enforce the trade-offs they're comfortable with. It's a social dilemma.

In the 1940s, Isaac Asimov wrote his famous laws of robotics -- the three laws of robotics. A robot may not harm a human being, a robot may not disobey a human being, and a robot may not allow itself to come to harm -- in this order of importance. But after 40 years or so and after so many stories pushing these laws to the limit, Asimov introduced the zeroth law, which takes precedence above all, and it's that a robot may not harm humanity as a whole. I don't know what this means in the context of driverless cars or any specific situation, and I don't know how we can implement it, but I hope that by recognizing that the regulation of driverless cars is not only a technological problem but also a societal cooperation problem, we can at least begin to ask the right questions.
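
The phrase "in this order of importance" describes a strict (lexicographic) priority, which is easy to encode; the hard part is not. In this hypothetical sketch, each candidate action comes with precomputed flags saying which laws it would violate, and deciding those flags, especially whether an action "harms humanity as a whole", is simply assumed away. That assumption is exactly the open question raised above.

```python
from dataclasses import dataclass

# Laws in priority order, highest first (paraphrased from the talk).
LAWS = ("zeroth: harm humanity", "first: harm a human",
        "second: disobey a human", "third: come to harm")


@dataclass(frozen=True)
class Action:
    name: str
    # One flag per law in LAWS order: True means the action would violate it.
    violations: tuple[bool, bool, bool, bool]


def choose(actions: list[Action]) -> Action:
    # Tuples compare lexicographically and False < True, so an action that
    # avoids a higher law always wins, whatever it does to the lower laws.
    return min(actions, key=lambda a: a.violations)


actions = [
    Action("obey an order to harm a person", (False, True, False, False)),
    Action("refuse the order", (False, False, True, False)),
    Action("refuse and shut down permanently", (False, False, True, True)),
]
print(choose(actions).name)
```

Because the comparison is lexicographic, no number of lower-law violations can outweigh a single higher-law violation, which is what "takes precedence above all" means for the zeroth law.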

Thank you.

(Applause)
