Let me show you something.

我先来给你们看点东西。

(Video) Girl: Okay, that's a cat sitting in a bed. The boy is petting the elephant. Those are people that are going on an airplane. That's a big airplane.

(视频)女孩: 好吧,这是只猫,坐在床上。 一个男孩摸着一头大象。 那些人正准备登机。 那是架大飞机。

Fei-Fei Li: This is a three-year-old child describing what she sees in a series of photos. She might still have a lot to learn about this world, but she's already an expert at one very important task: to make sense of what she sees. Our society is more technologically advanced than ever. We send people to the moon, we make phones that talk to us or customize radio stations that can play only music we like. Yet, our most advanced machines and computers still struggle at this task. So I'm here today to give you a progress report on the latest advances in our research in computer vision, one of the most frontier and potentially revolutionary technologies in computer science.

李飞飞: 这是一个三岁的小孩 在讲述她从一系列照片里看到的东西。 对这个世界, 她也许还有很多要学的东西, 但在一个重要的任务上, 她已经是专家了: 去理解她所看到的东西。 我们的社会已经在科技上 取得了前所未有的进步。 我们把人送上月球, 我们制造出可以与我们对话的手机, 或者订制一个音乐电台, 播放的全是我们喜欢的音乐。 然而,哪怕是我们最先进的机器和电脑 也会在这个问题上犯难。 所以今天我在这里, 向大家做个进度汇报: 关于我们在计算机 视觉方面最新的研究进展。 这是计算机科学领域最前沿的、 具有革命性潜力的科技。

Yes, we have prototyped cars that can drive by themselves, but without smart vision, they cannot really tell the difference between a crumpled paper bag on the road, which can be run over, and a rock that size, which should be avoided. We have made fabulous megapixel cameras, but we have not delivered sight to the blind. Drones can fly over massive land, but don't have enough vision technology to help us to track the changes of the rainforests. Security cameras are everywhere, but they do not alert us when a child is drowning in a swimming pool. Photos and videos are becoming an integral part of global life. They're being generated at a pace that's far beyond what any human, or teams of humans, could hope to view, and you and I are contributing to that at this TED. Yet our most advanced software is still struggling at understanding and managing this enormous content. So in other words, collectively as a society, we're very much blind, because our smartest machines are still blind.

是的,我们现在已经有了 具备自动驾驶功能的原型车, 但是如果没有敏锐的视觉, 它们就不能真正区分出 地上摆着的是一个压扁的纸袋, 可以被轻易压过, 还是一块相同体积的石头, 应该避开。 我们已经造出了超高清的相机, 但我们仍然无法把 这些画面传递给盲人。 我们的无人机可以飞跃广阔的土地, 却没有足够的视觉技术 去帮我们追踪热带雨林的变化。 安全摄像头到处都是, 但当有孩子在泳池里溺水时 它们无法向我们报警。 照片和视频,已经成为 全人类生活里不可缺少的部分。 它们以极快的速度被创造出来, 以至于没有任何人,或者团体, 能够完全浏览这些内容, 而你我正参与其中的这场TED, 也为之添砖加瓦。 直到现在,我们最先进的 软件也依然为之犯难: 该怎么理解和处理 这些数量庞大的内容? 所以换句话说, 在作为集体的这个社会里, 我们依然非常茫然,因为我们最智能的机器 依然有视觉上的缺陷。

"Why is this so hard?" you may ask. Cameras can take pictures like this one by converting lights into a two-dimensional array of numbers known as pixels, but these are just lifeless numbers. They do not carry meaning in themselves. Just like to hear is not the same as to listen, to take pictures is not the same as to see, and by seeing, we really mean understanding. In fact, it took Mother Nature 540 million years of hard work to do this task, and much of that effort went into developing the visual processing apparatus of our brains, not the eyes themselves. So vision begins with the eyes, but it truly takes place in the brain.

“为什么这么困难?”你也许会问。 照相机可以像这样获得照片: 它把采集到的光线转换成 二维数字矩阵来存储 ——也就是“像素”, 但这些仍然是死板的数字。 它们自身并不携带任何意义。 就像“听到”和“听”完全不同, “拍照”和“看”也完全不同。 通过“看”, 我们实际上是“理解”了这个画面。 事实上,大自然经过了5亿4千万年的努力 才完成了这个工作, 而这努力中更多的部分 是用在进化我们的大脑内 用于视觉处理的器官, 而不是眼睛本身。 所以“视觉”从眼睛采集信息开始, 但大脑才是它真正呈现意义的地方。
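
To make the "lifeless numbers" point concrete, here is a minimal Python sketch of a tiny grayscale picture stored as a two-dimensional array of pixels. The 4x4 values and the helper name `mean_brightness` are invented for illustration and do not come from the talk. The code can compute statistics over the numbers, but nothing in the array says what the picture shows.

```python
# A hypothetical 4x4 grayscale image: each number is one pixel's brightness (0-255).
image = [
    [12, 40, 38, 10],
    [35, 200, 210, 33],
    [30, 190, 220, 29],
    [11, 36, 34, 14],
]


def mean_brightness(pixels):
    """Average brightness over all pixels in a 2D list."""
    total = sum(sum(row) for row in pixels)
    count = sum(len(row) for row in pixels)
    return total / count


height, width = len(image), len(image[0])
print(f"{height}x{width} image, mean brightness {mean_brightness(image):.1f}")
```

A camera stops at this array; "seeing" in the talk's sense starts only when something assigns meaning to it.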

So for 15 years now, starting from my Ph.D. at Caltech and then leading Stanford's Vision Lab, I've been working with my mentors, collaborators and students to teach computers to see. Our research field is called computer vision and machine learning. It's part of the general field of artificial intelligence. So ultimately, we want to teach the machines to see just like we do: naming objects, identifying people, inferring 3D geometry of things, understanding relations, emotions, actions and intentions. You and I weave together entire stories of people, places and things the moment we lay our gaze on them.

所以15年来, 从我进入加州理工学院攻读Ph.D. 到后来领导 斯坦福大学的视觉实验室, 我一直在和我的导师、 合作者和学生们一起 教计算机如何去“看”。 我们的研究领域叫做 "计算机视觉与机器学习"。 这是AI(人工智能)领域的一个分支。 最终,我们希望能教会机器 像我们一样看见事物: 识别物品、辨别不同的人、 推断物体的立体形状、 理解事物的关联、 人的情绪、动作和意图。 像你我一样,只凝视一个画面一眼 就能理清整个故事中的人物、地点、事件。

The first step towards this goal is to teach a computer to see objects, the building block of the visual world. In its simplest terms, imagine this teaching process as showing the computers some training images of a particular object, let's say cats, and designing a model that learns from these training images. How hard can this be? After all, a cat is just a collection of shapes and colors, and this is what we did in the early days of object modeling. We'd tell the computer algorithm in a mathematical language that a cat has a round face, a chubby body, two pointy ears, and a long tail, and that looked all fine. But what about this cat? (Laughter) It's all curled up. Now you have to add another shape and viewpoint to the object model. But what if cats are hidden? What about these silly cats? Now you get my point. Even something as simple as a household pet can present an infinite number of variations to the object model, and that's just one object.

实现这一目标的第一步是 教计算机看到“对象”(物品), 这是建造视觉世界的基石。 在这个最简单的任务里, 想象一下这个教学过程: 给计算机看一些特定物品的训练图片, 比如说猫, 并让它从这些训练图片中, 学习建立出一个模型来。 这有多难呢? 不管怎么说,一只猫只是一些 形状和颜色拼凑起来的图案罢了, 比如这个就是我们 最初设计的抽象模型。 我们用数学的语言, 告诉计算机这种算法: “猫”有着圆脸、胖身子、 两个尖尖的耳朵,还有一条长尾巴, 这(算法)看上去挺好的。 但如果遇到这样的猫呢? (笑) 它整个蜷缩起来了。 现在你不得不加入一些别的形状和视角 来描述这个物品模型。 但如果猫是藏起来的呢? 再看看这些傻猫呢? 你现在知道了吧。 即使那些事物简单到 只是一只家养的宠物, 都可以呈现出无限种变化的外观模型, 而这还只是“一个”对象的模型。

So about eight years ago, a very simple and profound observation changed my thinking. No one tells a child how to see, especially in the early years. They learn this through real-world experiences and examples. If you consider a child's eyes as a pair of biological cameras, they take one picture about every 200 milliseconds, the average time an eye movement is made. So by age three, a child would have seen hundreds of millions of pictures of the real world. That's a lot of training examples. So instead of focusing solely on better and better algorithms, my insight was to give the algorithms the kind of training data that a child was given through experiences in both quantity and quality.
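
The "hundreds of millions of pictures" figure can be checked with a back-of-the-envelope calculation. The sketch below uses the 200-millisecond interval from the talk; the 12 waking hours per day is an assumption added here, not a number the speaker gives.

```python
# Rough estimate of how many "pictures" a child's eyes take by age three.
MS_PER_PICTURE = 200        # from the talk: one picture about every 200 ms
WAKING_HOURS_PER_DAY = 12   # assumption for illustration, not from the talk
YEARS = 3

pictures_per_second = 1000 / MS_PER_PICTURE
seconds_awake_per_day = WAKING_HOURS_PER_DAY * 3600
total_pictures = int(pictures_per_second * seconds_awake_per_day * 365 * YEARS)

print(f"{total_pictures:,} pictures")  # 236,520,000 pictures
```

Changing the waking-hours assumption moves the total up or down, but any plausible value stays in the hundreds of millions.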

所以大概在8年前, 一个非常简单、有冲击力的 观察改变了我的想法。 没有人教过婴儿怎么“看”, 尤其是在他们还很小的时候。 他们是从真实世界的经验 和例子中学到这个的。 如果你把孩子的眼睛 都看作是生物照相机, 那他们每200毫秒就拍一张照。 ——这是眼球转动一次的平均时间。 所以到3岁大的时候,一个孩子已经看过了 数亿张的真实世界照片。 这种“训练照片”的数量是非常大的。 所以,与其孤立地关注于 算法的优化、再优化, 我的关注点放在了给算法 提供像那样的训练数据 ——那些,婴儿们从经验中获得的 质量和数量都极其惊人的训练照片。

Once we knew this, we knew we needed to collect a data set that has far more images than we have ever had before, perhaps thousands of times more, and together with Professor Kai Li at Princeton University, we launched the ImageNet project in 2007. Luckily, we didn't have to mount a camera on our head and wait for many years. We went to the Internet, the biggest treasure trove of pictures that humans have ever created. We downloaded nearly a billion images and used crowdsourcing technology like the Amazon Mechanical Turk platform to help us to label these images. At its peak, ImageNet was one of the biggest employers of the Amazon Mechanical Turk workers: together, almost 50,000 workers from 167 countries around the world helped us to clean, sort and label nearly a billion candidate images. That was how much effort it took to capture even a fraction of the imagery a child's mind takes in in the early developmental years.

一旦我们知道了这个, 我们就明白自己需要收集的数据集, 必须比我们曾有过的任何数据库都丰富 ——可能要丰富数千倍。 因此,通过与普林斯顿大学的 Kai Li教授合作, 我们在2007年发起了 ImageNet(图片网络)计划。 幸运的是,我们不必在自己脑子里 装上一台照相机,然后等它拍很多年。 我们运用了互联网, 这个由人类创造的 最大的图片宝库。 我们下载了接近10亿张图片 并利用众包技术(利用互联网分配工作、发现创意或 解决技术问题),像“亚马逊土耳其机器人”这样的平台 来帮我们标记这些图片。 在高峰期时,ImageNet是「亚马逊土耳其机器人」 这个平台上最大的雇主之一: 来自世界上167个国家的 接近5万个工作者,在一起工作 帮我们筛选、排序、标记了 接近10亿张备选照片。 这就是我们为这个计划投入的精力, 去捕捉,一个婴儿可能在他早期发育阶段 获取的“一小部分”图像。

In hindsight, this idea of using big data to train computer algorithms may seem obvious now, but back in 2007, it was not so obvious. We were fairly alone on this journey for quite a while. Some very friendly colleagues advised me to do something more useful for my tenure, and we were constantly struggling for research funding. Once, I even joked to my graduate students that I would just reopen my dry cleaner's shop to fund ImageNet. After all, that's how I funded my college years.

事后我们再来看,这个利用大数据来训练 计算机算法的思路,也许现在看起来很普通, 但回到2007年时,它就不那么寻常了。 我们在这段旅程上孤独地前行了很久。 一些很友善的同事建议我 做一些更有用的事来获得终身教职, 而且我们也不断地为项目的研究经费发愁。 有一次,我甚至对 我的研究生学生开玩笑说: 我要重新回去开我的干洗店 来赚钱资助ImageNet了。 ——毕竟,我的大学时光 就是靠这个资助的。

So we carried on. In 2009, the ImageNet project delivered a database of 15 million images across 22,000 classes of objects and things organized by everyday English words. In both quantity and quality, this was an unprecedented scale. As an example, in the case of cats, we have more than 62,000 cats of all kinds of looks and poses and across all species of domestic and wild cats. We were thrilled to have put together ImageNet, and we wanted the whole research world to benefit from it, so in the TED fashion, we opened up the entire data set to the worldwide research community for free. (Applause)

所以我们仍然在继续着。 在2009年,ImageNet项目诞生了—— 一个含有1500万张照片的数据库, 涵盖了22000种物品。 这些物品是根据日常英语单词 进行分类组织的。 无论是在质量上还是数量上, 这都是一个规模空前的数据库。 举个例子,在"猫"这个对象中, 我们有超过62000只猫 长相各异,姿势五花八门, 而且涵盖了各种品种的家猫和野猫。 我们对ImageNet收集到的图片 感到异常兴奋, 而且我们希望整个研究界能从中受益, 所以以一种和TED一样的方式, 我们公开了整个数据库, 免费提供给全世界的研究团体。 (掌声)

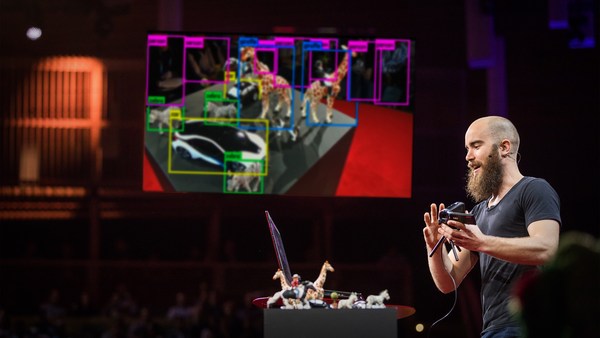

Now that we have the data to nourish our computer brain, we're ready to come back to the algorithms themselves. As it turned out, the wealth of information provided by ImageNet was a perfect match to a particular class of machine learning algorithms called convolutional neural network, pioneered by Kunihiko Fukushima, Geoff Hinton, and Yann LeCun back in the 1970s and '80s. Just like the brain consists of billions of highly connected neurons, a basic operating unit in a neural network is a neuron-like node. It takes input from other nodes and sends output to others. Moreover, these hundreds of thousands or even millions of nodes are organized in hierarchical layers, also similar to the brain. In a typical neural network we use to train our object recognition model, it has 24 million nodes, 140 million parameters, and 15 billion connections. That's an enormous model. Powered by the massive data from ImageNet and the modern CPUs and GPUs to train such a humongous model, the convolutional neural network blossomed in a way that no one expected. It became the winning architecture to generate exciting new results in object recognition. This is a computer telling us this picture contains a cat and where the cat is. Of course there are more things than cats, so here's a computer algorithm telling us the picture contains a boy and a teddy bear; a dog, a person, and a small kite in the background; or a picture of very busy things like a man, a skateboard, railings, a lamppost, and so on. Sometimes, when the computer is not so confident about what it sees, we have taught it to be smart enough to give us a safe answer instead of committing too much, just like we would do, but other times our computer algorithm is remarkable at telling us what exactly the objects are, like the make, model, year of the cars.

那么现在,我们有了用来 培育计算机大脑的数据库, 我们可以回到“算法”本身上来了。 因为ImageNet的横空出世,它提供的信息财富 完美地适用于一些特定类别的机器学习算法, 称作“卷积神经网络”, 最早由Kunihiko Fukushima,Geoff Hinton, 和Yann LeCun在上世纪七八十年代开创。 就像大脑是由上十亿的 紧密联结的神经元组成, 神经网络里最基础的运算单元 也是一个“神经元式”的节点。 每个节点从其它节点处获取输入信息, 然后把自己的输出信息再交给另外的节点。 此外,这些成千上万、甚至上百万的节点 都被按等级分布于不同层次, 就像大脑一样。 在一个我们用来训练“对象识别模型”的 典型神经网络里, 有着2400万个节点,1亿4千万个参数, 和150亿个联结。 这是一个庞大的模型。 借助ImageNet提供的巨大规模数据支持, 通过大量最先进的CPU和GPU, 来训练这些堆积如山的模型, “卷积神经网络” 以难以想象的方式蓬勃发展起来。 它成为了一个成功体系, 在对象识别领域, 产生了激动人心的新成果。 这张图,是计算机在告诉我们: 照片里有一只猫、 还有猫所在的位置。 当然不止有猫了, 所以这是计算机算法在告诉我们 照片里有一个男孩,和一个泰迪熊; 一只狗,一个人,和背景里的小风筝; 或者是一张拍摄于闹市的照片 比如人、滑板、栏杆、灯柱…等等。 有时候,如果计算机 不是很确定它看到的是什么, 我们还教它用足够聪明的方式 给出一个“安全”的答案,而不是“言多必失” ——就像人类面对这类问题时一样。 但在其他时候,我们的计算机 算法厉害到可以告诉我们 关于对象的更确切的信息, 比如汽车的品牌、型号、年份。
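
The description of a neuron-like node that takes input from other nodes, sends output onward, and sits in hierarchical layers can be sketched in a few lines of Python. This is a simplified illustration, not the model from the talk: it uses plain fully connected layers rather than convolutional ones, the ReLU nonlinearity is an assumed choice, and every weight is a hand-picked hypothetical number, whereas a real network learns its weights from training data.

```python
def relu(x):
    """Rectified linear unit: pass positive values, clamp negatives to zero."""
    return max(0.0, x)


def node(inputs, weights, bias):
    """One neuron-like node: weighted sum of its inputs, then a nonlinearity."""
    return relu(sum(i * w for i, w in zip(inputs, weights)) + bias)


def layer(inputs, weight_rows, biases):
    """A layer is a group of nodes that all read the same inputs."""
    return [node(inputs, w, b) for w, b in zip(weight_rows, biases)]


# Hypothetical hand-picked numbers, for illustration only.
pixels = [0.5, 1.0, 0.0]
hidden = layer(pixels, [[1.0, -1.0, 0.5], [0.5, 0.5, 0.5]], [0.0, 0.25])
output = layer(hidden, [[2.0, 1.0]], [0.0])
print(hidden, output)
```

The network in the talk follows the same pattern of nodes feeding layers that feed further layers, only with millions of nodes and parameters instead of three.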

We applied this algorithm to millions of Google Street View images across hundreds of American cities, and we have learned something really interesting: first, it confirmed our common wisdom that car prices correlate very well with household incomes. But surprisingly, car prices also correlate well with crime rates in cities, or voting patterns by zip codes.

我们在上百万张谷歌街景照片中 应用了这一算法, 那些照片涵盖了上百个美国城市。 我们从中发现一些有趣的事: 首先,它证实了我们的一些常识: 汽车的价格,与家庭收入 呈现出明显的相关性。 但令人惊奇的是,汽车价格与城市的犯罪率, 以及按邮编区域划分的投票倾向, 也呈现出明显的相关性。

So wait a minute. Is that it? Has the computer already matched or even surpassed human capabilities? Not so fast. So far, we have just taught the computer to see objects. This is like a small child learning to utter a few nouns. It's an incredible accomplishment, but it's only the first step. Soon, another developmental milestone will be hit, and children begin to communicate in sentences. So instead of saying this is a cat in the picture, you already heard the little girl telling us this is a cat lying on a bed.

那么等一下,这就是全部成果了吗? 计算机是不是已经达到, 或者甚至超过了人类的能力? ——还没有那么快。 目前为止,我们还只是 教会了计算机去看对象。 这就像是一个小宝宝学会说出几个名词。 这是一项难以置信的成就, 但这还只是第一步。 很快,我们就会到达 发展历程的另一个里程碑: 这个小孩会开始用“句子”进行交流。 所以不止是说这张图里有只“猫”, 你在开头已经听到小妹妹 告诉我们“这只猫是坐在床上的”。

So to teach a computer to see a picture and generate sentences, the marriage between big data and machine learning algorithm has to take another step. Now, the computer has to learn from both pictures as well as natural language sentences generated by humans. Just like the brain integrates vision and language, we developed a model that connects parts of visual things like visual snippets with words and phrases in sentences.

为了教计算机看懂图片并生成句子, “大数据”和“机器学习算法”的结合 需要更进一步。 现在,计算机需要从图片和人类创造的 自然语言句子中同时进行学习。 就像我们的大脑, 把视觉现象和语言融合在一起, 我们开发了一个模型, 可以把一部分视觉信息,像视觉片段, 与语句中的文字、短语联系起来。

About four months ago, we finally tied all this together and produced one of the first computer vision models that is capable of generating a human-like sentence when it sees a picture for the first time. Now, I'm ready to show you what the computer says when it sees the picture that the little girl saw at the beginning of this talk.

大约4个月前, 我们最终把所有技术结合在了一起, 创造了最早的一批“计算机视觉模型”之一, 它在第一次看到一张图片时,就有能力生成 类似人类语言的句子。 现在,我准备给你们看看 计算机看到图片时会说些什么 ——还是那些在演讲开头给小女孩看的图片。

(Video) Computer: A man is standing next to an elephant. A large airplane sitting on top of an airport runway.

(视频)计算机: “一个男人站在一头大象旁边。” “一架大飞机停在机场跑道一端。”

FFL: Of course, we're still working hard to improve our algorithms, and it still has a lot to learn. (Applause)

李飞飞: 当然,我们还在努力改善我们的算法, 它还有很多要学的东西。 (掌声)

And the computer still makes mistakes.

计算机还是会犯很多错误的。

(Video) Computer: A cat lying on a bed in a blanket.

(视频)计算机: “一只猫躺在床上的毯子上。”

FFL: So of course, when it sees too many cats, it thinks everything might look like a cat.

李飞飞:所以…当然——如果它看过太多种的猫, 它就会觉得什么东西都长得像猫……

(Video) Computer: A young boy is holding a baseball bat. (Laughter)

(视频)计算机: “一个小男孩拿着一根棒球棍。” (笑声)

FFL: Or, if it hasn't seen a toothbrush, it confuses it with a baseball bat.

李飞飞:或者…如果它从没见过牙刷, 它就分不清牙刷和棒球棍的区别。

(Video) Computer: A man riding a horse down a street next to a building. (Laughter)

(视频)计算机: “建筑旁的街道上有一个男人骑马经过。” (笑声)

FFL: We haven't taught Art 101 to the computers.

李飞飞:我们还没教它Art 101 (美国大学艺术基础课)。

(Video) Computer: A zebra standing in a field of grass.

(视频)计算机: “一只斑马站在一片草原上。”

FFL: And it hasn't learned to appreciate the stunning beauty of nature like you and I do.

李飞飞:它还没学会像你我一样 欣赏大自然里的绝美景色。

So it has been a long journey. To get from age zero to three was hard. The real challenge is to go from three to 13 and far beyond. Let me remind you with this picture of the boy and the cake again. So far, we have taught the computer to see objects or even tell us a simple story when seeing a picture.

所以,这是一条漫长的道路。 将一个孩子从出生培养到3岁是很辛苦的。 而真正的挑战是从3岁到13岁的过程中, 而且远远不止于此。 让我再给你们看看这张 关于小男孩和蛋糕的图。 目前为止, 我们已经教会计算机“看”对象, 或者甚至基于图片, 告诉我们一个简单的故事。

(Video) Computer: A person sitting at a table with a cake.

(视频)计算机: “一个人坐在放蛋糕的桌子旁。”

FFL: But there's so much more to this picture than just a person and a cake. What the computer doesn't see is that this is a special Italian cake that's only served during Easter time. The boy is wearing his favorite t-shirt given to him as a gift by his father after a trip to Sydney, and you and I can all tell how happy he is and what's exactly on his mind at that moment.

李飞飞:但图片里还有更多信息 ——远不止一个人和一个蛋糕。 计算机无法理解的是: 这是一个特殊的意大利蛋糕, 它只在复活节限时供应。 而这个男孩穿着的 是他最喜欢的T恤衫, 那是他父亲去悉尼旅行时 带给他的礼物。 另外,你和我都能清楚地看出, 这个小孩有多高兴,以及这一刻在想什么。

This is my son Leo. On my quest for visual intelligence, I think of Leo constantly and the future world he will live in. When machines can see, doctors and nurses will have extra pairs of tireless eyes to help them to diagnose and take care of patients. Cars will run smarter and safer on the road. Robots, not just humans, will help us to brave the disaster zones to save the trapped and wounded. We will discover new species, better materials, and explore unseen frontiers with the help of the machines.

这是我的儿子Leo。 在我探索视觉智能的道路上, 我不断地想到Leo 和他未来将要生活的那个世界。 当机器可以“看到”的时候, 医生和护士会获得一双额外的、 不知疲倦的眼睛, 帮他们诊断病情、照顾病人。 汽车可以在道路上行驶得 更智能、更安全。 机器人,而不只是人类, 会帮我们救助灾区被困和受伤的人员。 我们会发现新的物种、更好的材料, 还可以在机器的帮助下 探索从未见到过的前沿地带。

Little by little, we're giving sight to the machines. First, we teach them to see. Then, they help us to see better. For the first time, human eyes won't be the only ones pondering and exploring our world. We will not only use the machines for their intelligence, we will also collaborate with them in ways that we cannot even imagine.

一点一点地, 我们正在赋予机器以视力。 首先,我们教它们去“看”。 然后,它们反过来也帮助我们, 让我们看得更清楚。 这是第一次,人类的眼睛不再 独自地思考和探索我们的世界。 我们将不止是“使用”机器的智力, 我们还要以一种从未想象过的方式, 与它们“合作”。

This is my quest: to give computers visual intelligence and to create a better future for Leo and for the world.

我所追求的是: 赋予计算机视觉智能, 并为Leo和这个世界, 创造出更美好的未来。

Thank you.

谢谢。

(Applause)

(掌声)