Let me show you something.

Dopustite da vam pokažem nešto.

(Video) Girl: Okay, that's a cat sitting in a bed. The boy is petting the elephant. Those are people that are going on an airplane. That's a big airplane.

(Video) Djevojčica: Dobro, to je mačka koja sjedi na krevetu. Dječak mazi slona. Ovo su ljudi koji idu u avion. To je veliki avion.

Fei-Fei Li: This is a three-year-old child describing what she sees in a series of photos. She might still have a lot to learn about this world, but she's already an expert at one very important task: to make sense of what she sees. Our society is more technologically advanced than ever. We send people to the moon, we make phones that talk to us or customize radio stations that can play only music we like. Yet, our most advanced machines and computers still struggle at this task. So I'm here today to give you a progress report on the latest advances in our research in computer vision, one of the most frontier and potentially revolutionary technologies in computer science.

Fei-Fei Li: Ovo je trogodišnje dijete koje opisuje što vidi na ovim slikama. Iako ima još dosta toga što mora naučiti o svijetu, već je ekspert u nečemu važnom: razumije što vidi. Naše društvo je tehnološki naprednije no ikada. Šaljemo ljude na Mjesec, izrađujemo telefone koji pričaju s nama i prilagođene radio stanice koje puštaju samo glazbu koju volimo. Ipak, naši najnapredniji uređaji i računala imaju poteškoća s ovim zadatkom. Ovdje sam danas kako bih vas izvijestila o najnovijim dostignućima u istraživanju računalnog vida, jednoj od glavnih i potencijalno revolucionarnih tehnologija računarstva.

Yes, we have prototyped cars that can drive by themselves, but without smart vision, they cannot really tell the difference between a crumpled paper bag on the road, which can be run over, and a rock that size, which should be avoided. We have made fabulous megapixel cameras, but we have not delivered sight to the blind. Drones can fly over massive land, but don't have enough vision technology to help us to track the changes of the rainforests. Security cameras are everywhere, but they do not alert us when a child is drowning in a swimming pool. Photos and videos are becoming an integral part of global life. They're being generated at a pace that's far beyond what any human, or teams of humans, could hope to view, and you and I are contributing to that at this TED. Yet our most advanced software is still struggling at understanding and managing this enormous content. So in other words, collectively as a society, we're very much blind, because our smartest machines are still blind.

Imamo prototipe auta koji se sami voze, ali bez pametnog vida ne mogu zapravo vidjeti razliku između zgužvane papirnate vrećice na putu, koju mogu pregaziti, i kamena te veličine, koji treba izbjeći. Imamo odlične megapikselne kamere, ali nismo dali vid slijepima. Dronovi mogu letjeti vrlo daleko, ali nemaju dovoljno tehnologije vida da nam pomognu pratiti promjene u kišnim šumama. Sigurnosne kamere su svugdje, ali ne upozoravaju nas kada se dijete utaplja u bazenu. Slike i videi postaju integralni dio globalnog života. Stvaraju se brzinom koja je daleko veća od one koju bi čovjek ili timovi ljudi mogli pregledati, a vi i ja pridonosimo tome ovdje na TED-u. Ipak, naš najnapredniji softver se i dalje muči oko razumijevanja tog ogromnog sadržaja i upravljanja njime. Drugim riječima, zajedno kao društvo, poprilično smo slijepi, jer su naši najpametniji uređaji i dalje slijepi.

"Why is this so hard?" you may ask. Cameras can take pictures like this one by converting lights into a two-dimensional array of numbers known as pixels, but these are just lifeless numbers. They do not carry meaning in themselves. Just like to hear is not the same as to listen, to take pictures is not the same as to see, and by seeing, we really mean understanding. In fact, it took Mother Nature 540 million years of hard work to do this task, and much of that effort went into developing the visual processing apparatus of our brains, not the eyes themselves. So vision begins with the eyes, but it truly takes place in the brain.

"Zašto je to tako teško?", možda se pitate. Kamere mogu fotografirati slike poput ove pretvarajući svjetlost u dvodimenzionalne redove brojeva poznate kao pikseli, ali to su samo beživotni brojevi. Ne nose smisao u sebi. Jednako kao što slušati ne znači isto što i čuti, fotografirati sliku nije isto što i vidjeti, a pod vidjeti mislimo na razumijevanje. Zapravo, prirodi je bilo potrebno 540 milijuna godina teškog posla da to uspije, a većina tog posla otišla je u razvijanje uređaja za obradu vida u našem mozgu, ne u samim očima. Vid započinje s očima, ali zapravo se sve događa u mozgu.

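To make the "two-dimensional array of numbers" concrete, here is a minimal sketch in Python. The pixel values and the `render` helper are made up for illustration and are not from the talk; the point is only that the grid holds brightness numbers and nothing more, and that any meaning comes from whoever (or whatever) interprets them.

```python
# A tiny grayscale "image": each number is one pixel's brightness,
# from 0 (black) to 255 (white). The values are invented for illustration.
image = [
    [0,   0,   0,   0, 0],
    [0, 255, 255, 255, 0],
    [0, 255,   0, 255, 0],
    [0, 255, 255, 255, 0],
    [0,   0,   0,   0, 0],
]


def render(pixels, threshold=128):
    """Draw bright pixels as '#' and dark pixels as '.' so a human can see the shape."""
    return "\n".join(
        "".join("#" if value >= threshold else "." for value in row)
        for row in pixels
    )


height, width = len(image), len(image[0])
print(f"{height} x {width} pixels")
print(render(image))
```

A person looking at the printed grid sees a hollow square at once; to the program it is still only 25 integers.
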
So for 15 years now, starting from my Ph.D. at Caltech and then leading Stanford's Vision Lab, I've been working with my mentors, collaborators and students to teach computers to see. Our research field is called computer vision and machine learning. It's part of the general field of artificial intelligence. So ultimately, we want to teach the machines to see just like we do: naming objects, identifying people, inferring 3D geometry of things, understanding relations, emotions, actions and intentions. You and I weave together entire stories of people, places and things the moment we lay our gaze on them.

Već 15 godina, započevši od mog doktorata na Caltechu i zatim vodeći Stanfordov laboratorij za vid, radila sam s mentorima, suradnicima i studentima kako bismo naučili računala da vide. Naše polje se zove računalni vid i strojno učenje. Dio je većeg polja umjetne inteligencije. Naposljetku, želimo naučiti uređaje da vide kao što mi vidimo: imenovanje objekata, prepoznavanje ljudi, razumijevanje trodimenzionalnosti objekata, razumijevanje odnosa, emocija, akcija i namjera. Vi i ja vidimo cijele priče ljudi, mjesta i stvari u trenutku kada ih pogledamo.

The first step towards this goal is to teach a computer to see objects, the building block of the visual world. In its simplest terms, imagine this teaching process as showing the computers some training images of a particular object, let's say cats, and designing a model that learns from these training images. How hard can this be? After all, a cat is just a collection of shapes and colors, and this is what we did in the early days of object modeling. We'd tell the computer algorithm in a mathematical language that a cat has a round face, a chubby body, two pointy ears, and a long tail, and that looked all fine. But what about this cat? (Laughter) It's all curled up. Now you have to add another shape and viewpoint to the object model. But what if cats are hidden? What about these silly cats? Now you get my point. Even something as simple as a household pet can present an infinite number of variations to the object model, and that's just one object.

Prvi korak do ovog cilja je naučiti računala da vide objekte, građevne jedinice vizualnog svijeta. U svom najjednostavnijem obliku, zamislite ovaj proces učenja kao pokazivanje računalu raznih prizora za trening određenog objekta, recimo mačaka, i dizajniranje modela koji uči iz ovih prikaza za trening. Koliko teško to može biti? Uostalom, mačka je samo skup oblika i boja, i ovo je ono što smo radili u početcima modeliranja objekata. Rekli bismo računalnom algoritmu matematičkim jezikom da mačka ima okruglo lice, debeljuškasto tijelo, dva šiljata uha i dugačak rep, i to je izgledalo sasvim u redu. Ali što je s ovom mačkom? (Smijeh) Sva je sklupčana. Sad morate dodati drugi oblik i pogled modelu objekta. Ali što ako su mačke skrivene? Što je s ovim smiješnim mačkama? Sad vidite što želim reći. Čak i nešto jednostavno poput kućnog ljubimca može imati beskonačan broj varijacija modela objekta, i to je samo jedan objekt.

So about eight years ago, a very simple and profound observation changed my thinking. No one tells a child how to see, especially in the early years. They learn this through real-world experiences and examples. If you consider a child's eyes as a pair of biological cameras, they take one picture about every 200 milliseconds, the average time an eye movement is made. So by age three, a child would have seen hundreds of millions of pictures of the real world. That's a lot of training examples. So instead of focusing solely on better and better algorithms, my insight was to give the algorithms the kind of training data that a child was given through experiences in both quantity and quality.

Prije otprilike osam godina, vrlo jednostavno i duboko zapažanje promijenilo mi je razmišljanje. Nitko ne govori djetetu kako da vidi, posebno u ranim godinama. Djeca to uče kroz iskustvo i primjere iz stvarnog svijeta. Ako smatrate dječje oči parom bioloških kamera, one fotografiraju otprilike svakih 200 milisekundi, što je prosječno vrijeme potrebno za pokret oka. Do svoje treće godine, dijete bi vidjelo stotine milijuna slika stvarnog svijeta. To je puno primjera za vježbu. Umjesto fokusiranja samo na sve bolje i bolje algoritme, moja je zamisao bila dati algoritmima onakve podatke za vježbu kakve dijete dobiva kroz iskustvo, i po količini i po kvaliteti.
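
The "hundreds of millions" figure can be checked with back-of-the-envelope arithmetic. The sketch below takes the 200-millisecond interval and the age of three from the talk; the 12 waking hours per day is an assumption added here for illustration, and the constant and function names are hypothetical.

```python
MS_PER_PICTURE = 200        # from the talk: one "picture" about every 200 ms
WAKING_HOURS_PER_DAY = 12   # assumption for illustration, not stated in the talk
DAYS_PER_YEAR = 365
YEARS = 3                   # from the talk: "by age three"


def pictures_seen(years=YEARS, waking_hours=WAKING_HOURS_PER_DAY):
    """Estimate how many eye-movement 'pictures' a child takes in while awake."""
    pictures_per_second = 1000 // MS_PER_PICTURE          # 5 per second
    seconds_awake = years * DAYS_PER_YEAR * waking_hours * 3600
    return pictures_per_second * seconds_awake


print(f"{pictures_seen():,}")  # 236,520,000
```

Under these assumptions the estimate lands at roughly 237 million, consistent with "hundreds of millions"; a different guess for waking hours scales the result proportionally.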

Once we knew this, we knew we needed to collect a data set that has far more images than we have ever had before, perhaps thousands of times more, and together with Professor Kai Li at Princeton University, we launched the ImageNet project in 2007. Luckily, we didn't have to mount a camera on our head and wait for many years. We went to the Internet, the biggest treasure trove of pictures that humans have ever created. We downloaded nearly a billion images and used crowdsourcing technology like the Amazon Mechanical Turk platform to help us to label these images. At its peak, ImageNet was one of the biggest employers of the Amazon Mechanical Turk workers: together, almost 50,000 workers from 167 countries around the world helped us to clean, sort and label nearly a billion candidate images. That was how much effort it took to capture even a fraction of the imagery a child's mind takes in in the early developmental years.

Jednom kada smo to shvatili, znali smo da moramo prikupiti skup podataka koji ima puno više prikaza no što smo ikad prije imali, možda i tisuće puta više, i zajedno s profesorom Kai Li na Sveučilištu Princeton, 2007. pokrenuli smo projekt ImageNet. Srećom, nismo morali montirati kamere na glave i čekati godinama. Otišli smo na Internet, najveću riznicu slika koju je čovječanstvo stvorilo. Skinuli smo skoro milijardu slika i koristili crowdsourcing tehnologiju poput platforme Amazon Mechanical Turk da označimo te prikaze. Na svom vrhuncu, ImageNet je bio jedan od najvećih poslodavaca radnika Amazon Mechanical Turka: zajedno, skoro 50.000 radnika iz 167 država svijeta pomoglo nam je da očistimo, sortiramo i označimo skoro milijardu kandidatnih prikaza. Toliko truda je trebalo da se uhvati tek dio prikaza koje djetetov um upije u ranim godinama razvoja.

In hindsight, this idea of using big data to train computer algorithms may seem obvious now, but back in 2007, it was not so obvious. We were fairly alone on this journey for quite a while. Some very friendly colleagues advised me to do something more useful for my tenure, and we were constantly struggling for research funding. Once, I even joked to my graduate students that I would just reopen my dry cleaner's shop to fund ImageNet. After all, that's how I funded my college years.

Gledajući unatrag, ova ideja korištenja velike količine podataka za treniranje računalnih algoritama sada se možda čini očiglednom, ali 2007. nije bila tako očigledna. Prilično dugo bili smo poprilično sami na tom putu. Neke prijateljski nastrojene kolege su me savjetovale da radim nešto korisnije za svoju karijeru, i cijelo vrijeme smo se borili za financiranje istraživanja. Jednom sam se čak našalila sa studentima da ću ponovno otvoriti kemijsku čistionicu kako bih mogla financirati ImageNet. Uostalom, tako sam financirala svoj studij.

So we carried on. In 2009, the ImageNet project delivered a database of 15 million images across 22,000 classes of objects and things organized by everyday English words. In both quantity and quality, this was an unprecedented scale. As an example, in the case of cats, we have more than 62,000 cats of all kinds of looks and poses and across all species of domestic and wild cats. We were thrilled to have put together ImageNet, and we wanted the whole research world to benefit from it, so in the TED fashion, we opened up the entire data set to the worldwide research community for free. (Applause)

Nastavili smo dalje. 2009. ImageNet je dosegao bazu podataka od 15 milijuna prikaza preko 22.000 klasa objekata i stvari organiziranih u svakodnevne engleske riječi. I po kvantiteti i po kvaliteti ovo je dosad nedostignuta skala. Kao primjer, u slučaju mačaka, imamo više od 62.000 mačaka u svim oblicima i pozama, i različitih vrsta domaćih i divljih mačaka. Bili smo oduševljeni što smo sastavili ImageNet, i htjeli smo da cijeli znanstveni svijet ima koristi od njega, tako da smo po modi TED-a otvorili cijeli skup podataka svim istraživačkim zajednicama, besplatno. (Pljesak)

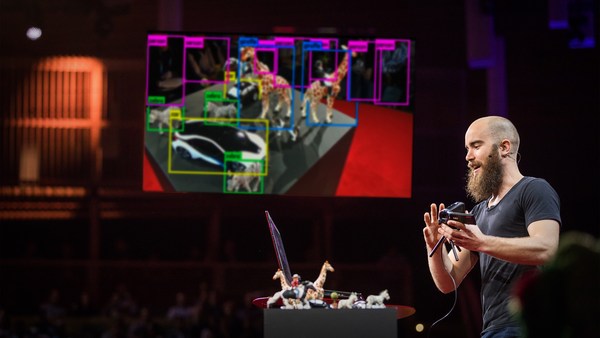

Now that we have the data to nourish our computer brain, we're ready to come back to the algorithms themselves. As it turned out, the wealth of information provided by ImageNet was a perfect match to a particular class of machine learning algorithms called convolutional neural network, pioneered by Kunihiko Fukushima, Geoff Hinton, and Yann LeCun back in the 1970s and '80s. Just like the brain consists of billions of highly connected neurons, a basic operating unit in a neural network is a neuron-like node. It takes input from other nodes and sends output to others. Moreover, these hundreds of thousands or even millions of nodes are organized in hierarchical layers, also similar to the brain. In a typical neural network we use to train our object recognition model, it has 24 million nodes, 140 million parameters, and 15 billion connections. That's an enormous model. Powered by the massive data from ImageNet and the modern CPUs and GPUs to train such a humongous model, the convolutional neural network blossomed in a way that no one expected. It became the winning architecture to generate exciting new results in object recognition. This is a computer telling us this picture contains a cat and where the cat is. Of course there are more things than cats, so here's a computer algorithm telling us the picture contains a boy and a teddy bear; a dog, a person, and a small kite in the background; or a picture of very busy things like a man, a skateboard, railings, a lamppost, and so on. Sometimes, when the computer is not so confident about what it sees, we have taught it to be smart enough to give us a safe answer instead of committing too much, just like we would do, but other times our computer algorithm is remarkable at telling us what exactly the objects are, like the make, model, year of the cars.

Sad kad imamo podatke da opskrbimo mozgove naših računala, spremni smo vratiti se na same algoritme. Ispalo je kako je bogatstvo informacija s ImageNeta savršeno za određenu vrstu algoritama za strojno učenje koji se zovu konvolucijske neuronske mreže, a čiji su pioniri bili Kunihiko Fukushima, Geoff Hinton i Yann LeCun davnih 1970-ih i 1980-ih. Upravo kako se mozak sastoji od milijardi vrlo povezanih neurona, osnovna operacijska jedinica neuronskih mreža jest čvor sličan neuronu. Prima podatke od drugih čvorova i šalje ih drugima. Ove stotine tisuća ili čak milijuni čvorova organizirani su po hijerarhijskim slojevima, slično kao u mozgu. Tipična neuronska mreža koju koristimo za treniranje našeg modela prepoznavanja objekata ima 24 milijuna čvorova, 140 milijuna parametara i 15 milijardi veza. To je ogroman model. Pogonjena mnoštvom podataka s ImageNeta te modernim CPU-ima i GPU-ima za treniranje tako ogromnog modela, konvolucijska neuronska mreža je procvala na način koji nitko nije očekivao. Postala je pobjednička arhitektura koja je dovodila do novih uzbudljivih rezultata u prepoznavanju objekata. Ovo je računalo koje nam govori da je na slici mačka i gdje je mačka. Naravno, ne radi se samo o mačkama, pa nam ovdje računalni algoritam govori da slika sadrži dječaka i medvjedića; psa, osobu i malog zmaja u pozadini; ili slika vrlo zbrkanih stvari poput čovjeka, skateboarda, ograde, ulične svjetiljke itd. Ponekad, kada računalo nije sigurno što vidi, naučili smo ga da bude dovoljno pametno da nam pruži siguran odgovor umjesto da se previše obveže, kao što bismo i mi učinili, ali u drugim slučajevima računalni algoritam nam izvanredno točno kaže što su ti objekti, poput marke, modela i godine auta.
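
The idea of a neuron-like node that "takes input from other nodes and sends output to others", stacked in layers, can be sketched in a few lines of Python. This is a deliberately tiny, fully connected toy, not a convolutional network and not the model described in the talk; the weights, biases, and function names are hypothetical, and nothing is being trained here.

```python
def node(inputs, weights, bias):
    """One neuron-like node: a weighted sum of its inputs, clipped at zero (ReLU)."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return max(0.0, total)


def layer(inputs, weight_rows, biases):
    """A layer is a group of nodes that all read the same inputs."""
    return [node(inputs, w, b) for w, b in zip(weight_rows, biases)]


# Hypothetical weights chosen by hand; a real network learns them from data.
inputs = [1.0, 2.0]
hidden = layer(inputs, [[1.0, 1.0], [1.0, -1.0]], [0.0, 0.0])  # first layer
output = layer(hidden, [[0.5, 2.0]], [0.5])                    # second layer

print(hidden)  # [3.0, 0.0]
print(output)  # [2.0]
```

Each layer's outputs become the next layer's inputs, which is the hierarchical arrangement the talk describes. The networks mentioned there do the same thing with millions of nodes and with weights learned from ImageNet rather than written by hand.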

We applied this algorithm to millions of Google Street View images across hundreds of American cities, and we have learned something really interesting: first, it confirmed our common wisdom that car prices correlate very well with household incomes. But surprisingly, car prices also correlate well with crime rates in cities, or voting patterns by zip codes.

Primijenili smo ovaj algoritam na milijune Google Street View prikaza u stotinama američkih gradova, i spoznali smo nešto vrlo zanimljivo: prvo, potvrdilo se staro pravilo da cijene auta dobro koreliraju s kućnim primanjima. Ali, iznenađujuće, cijene auta dobro koreliraju i sa stopom kriminala u gradovima, ili s načinom glasanja po poštanskom broju.

So wait a minute. Is that it? Has the computer already matched or even surpassed human capabilities? Not so fast. So far, we have just taught the computer to see objects. This is like a small child learning to utter a few nouns. It's an incredible accomplishment, but it's only the first step. Soon, another developmental milestone will be hit, and children begin to communicate in sentences. So instead of saying this is a cat in the picture, you already heard the little girl telling us this is a cat lying on a bed.

Čekajte. Je li to to? Je li nas računalo već sustiglo ili čak prestiglo u našim sposobnostima? Ne tako brzo. Zasad smo samo naučili računalo da vidi objekte. To je kao kad malo dijete uči izgovoriti nekoliko imenica. To je ogromno postignuće, ali je to tek prvi korak. Uskoro će biti dosegnuta druga razvojna prekretnica, i djeca počinju komunicirati u rečenicama. Stoga, umjesto da kaže kako je na slici mačka, već ste čuli malu djevojčicu koja nam govori da mačka leži na krevetu.

So to teach a computer to see a picture and generate sentences, the marriage between big data and machine learning algorithm has to take another step. Now, the computer has to learn from both pictures as well as natural language sentences generated by humans. Just like the brain integrates vision and language, we developed a model that connects parts of visual things like visual snippets with words and phrases in sentences.

Kako bismo naučili računalo da vidi sliku i stvori rečenice, brak između velikih podataka i algoritama strojnog učenja mora ići korak dalje. Računalo sada mora učiti i iz slika i iz rečenica prirodnog jezika koje su stvorili ljudi. Upravo kako mozak integrira vid i jezik, razvili smo model koji spaja dijelove vidljivih stvari, poput vizualnih isječaka, s riječima i frazama u rečenicama.

About four months ago, we finally tied all this together and produced one of the first computer vision models that is capable of generating a human-like sentence when it sees a picture for the first time. Now, I'm ready to show you what the computer says when it sees the picture that the little girl saw at the beginning of this talk.

Otprilike prije četiri mjeseca, konačno smo uspjeli sve povezati i proizveli smo jedan od prvih modela računalnog vida koji je sposoban stvoriti rečenicu sličnu ljudskoj kada vidi sliku po prvi put. Pokazat ću vam što računalo kaže kada vidi slike koje je mala djevojčica vidjela na početku govora.

(Video) Computer: A man is standing next to an elephant. A large airplane sitting on top of an airport runway.

(Video) Računalo: Čovjek stoji pored slona. Veliki avion sjedi na vrhu avionske piste.

FFL: Of course, we're still working hard to improve our algorithms, and it still has a lot to learn. (Applause)

FFL: Naravno, i dalje se trudimo unaprijediti naše algoritme, i još puno toga mora naučiti. (Pljesak)

And the computer still makes mistakes.

I računalo i dalje pravi greške.

(Video) Computer: A cat lying on a bed in a blanket.

(Video) Računalo: Mačka leži na krevetu u deki.

FFL: So of course, when it sees too many cats, it thinks everything might look like a cat.

FFL: Naravno, kada vidi previše mačaka, misli da bi sve moglo izgledati kao mačka.

(Video) Computer: A young boy is holding a baseball bat. (Laughter)

(Video) Računalo: Dječak drži bejzbolsku palicu. (Smijeh)

FFL: Or, if it hasn't seen a toothbrush, it confuses it with a baseball bat.

FFL: Ili, ako nije vidjelo četkicu za zube, pomiješat će je s bejzbolskom palicom.

(Video) Computer: A man riding a horse down a street next to a building. (Laughter)

(Video) Računalo: Čovjek jaše konja niz ulicu pored zgrade. (Smijeh)

FFL: We haven't taught Art 101 to the computers.

FFL: Nismo računalo naučili neke osnove umjetnosti.

(Video) Computer: A zebra standing in a field of grass.

(Video) Računalo: Zebra stoji u polju trave.

FFL: And it hasn't learned to appreciate the stunning beauty of nature like you and I do.

FFL: I nije naučilo diviti se prekrasnoj ljepoti prirode kao vi i ja.

So it has been a long journey. To get from age zero to three was hard. The real challenge is to go from three to 13 and far beyond. Let me remind you with this picture of the boy and the cake again. So far, we have taught the computer to see objects or even tell us a simple story when seeing a picture.

Bilo je to dugo putovanje. Od rođenja do treće godine je bilo teško. Pravi izazov je doći od treće do trinaeste godine, i dalje. Podsjetit ću vas opet ovom slikom dječaka i kolača. Dosad smo naučili računalo da vidi objekte ili čak da nam ispriča jednostavnu priču o onome što je na slici.

(Video) Computer: A person sitting at a table with a cake.

(Video) Računalo: Osoba sjedi za stolom s kolačem.

FFL: But there's so much more to this picture than just a person and a cake. What the computer doesn't see is that this is a special Italian cake that's only served during Easter time. The boy is wearing his favorite t-shirt given to him as a gift by his father after a trip to Sydney, and you and I can all tell how happy he is and what's exactly on his mind at that moment.

FFL: Ali postoji puno više na ovoj slici nego samo osoba i kolač. Ono što računalo ne vidi jest da je to poseban talijanski kolač koji se servira jedino za vrijeme Uskrsa. Dječak nosi svoju omiljenu majicu koju je dobio na dar od oca nakon putovanja u Sydney, i vi i ja možemo reći koliko je sretan i što mu je točno na umu u ovom trenu.

This is my son Leo. On my quest for visual intelligence, I think of Leo constantly and the future world he will live in. When machines can see, doctors and nurses will have extra pairs of tireless eyes to help them to diagnose and take care of patients. Cars will run smarter and safer on the road. Robots, not just humans, will help us to brave the disaster zones to save the trapped and wounded. We will discover new species, better materials, and explore unseen frontiers with the help of the machines.

To je moj sin Leo. Na mom pohodu na vidnu inteligenciju, razmišljam o Leu konstantno i budućnosti u kojoj će živjeti. Kada uređaji budu mogli vidjeti, doktori i sestre će imati dodatne parove neumornih očiju koje im pomažu dijagnosticirati i pobrinuti se za pacijente. Auti će voziti pametnije i sigurnije na putu. Roboti, ne samo ljudi, pomoći će nam u zonama katastrofe kako bi spasili zatočene i ozlijeđene. Otkrit ćemo nove vrste, bolje materijale, i istražiti neviđene granice uz pomoć uređaja.

Little by little, we're giving sight to the machines. First, we teach them to see. Then, they help us to see better. For the first time, human eyes won't be the only ones pondering and exploring our world. We will not only use the machines for their intelligence, we will also collaborate with them in ways that we cannot even imagine.

Malo po malo, dajemo vid uređajima. Prvo mi njih učimo da vide. Onda oni nama pomažu vidjeti bolje. Po prvi put, ljudske oči neće biti jedine koje promatraju i istražuju naš svijet. Nećemo koristiti uređaje samo zbog njihove inteligencije, nego ćemo i surađivati s njima na načine koje ne možemo ni zamisliti.

This is my quest: to give computers visual intelligence and to create a better future for Leo and for the world.

Ovo je moj pothvat: dati računalima vidnu inteligenciju i stvoriti bolje sutra za Lea i za svijet.

Thank you.

Hvala vam.

(Applause)

(Pljesak)